In 2026, as search engine competition becomes increasingly intense, the teams that can obtain search result data faster are more likely to discover traffic opportunities first. Whether for SEO, advertising, eCommerce product research, or brand monitoring, more and more teams are relying on Google SERP scrapers to automatically collect search results instead of manually searching and copying data.

This article starts from real user needs and explains how Google SERP scrapers work, which use cases they suit, how the mainstream platforms compare, and how to choose a Google SERP Scraper API that fits your business stage.

What is a Google SERP Scraper?

A Google SERP Scraper is essentially a tool that can automatically retrieve data from Google search result pages. It helps users collect keyword rankings, ads, related questions, AI Overviews, and other data in bulk and output them in a structured format, without requiring repeated manual searches.

For many teams, the real challenge is not “viewing search results,” but obtaining this data continuously, reliably, and at scale. When you need to monitor thousands of keywords, manual work becomes almost impossible. This is why more and more companies use Google SERP scraper tools to automate data collection.

Traditional manual searching has several obvious problems:

● Search results change in real time based on location, device, and language

● Manual recording is extremely inefficient

● High-frequency searches can easily trigger restrictions

● Data cannot be directly integrated into analysis systems

A Google search results scraper can automatically complete these tasks, including simulating searches from different countries, automatically rotating IPs, bypassing restrictions, and outputting structured data.

How Does a Google SERP Scraper Work?

A mature Google SERP scraper essentially simulates real user search behavior. After a user enters a keyword, the tool automatically sends a search request to Google and retrieves public information from the search results page, such as rankings, titles, related searches, and People Also Ask content. The originally scattered data on the page is then organized into structured formats (JSON/CSV/Excel, etc.) for easier analysis, filtering, and export.
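As a rough illustration of the "structured formats" step, the sketch below turns a few already-extracted results into JSON and CSV using only the Python standard library. The `OrganicResult` record and its field names are assumptions made for this example, not the schema of any particular tool.

```python
import csv
import io
import json
from dataclasses import asdict, dataclass


@dataclass
class OrganicResult:
    # Field names are illustrative, not any vendor's actual schema.
    keyword: str
    position: int
    title: str
    url: str


FIELDS = ["keyword", "position", "title", "url"]


def to_json(results):
    """Serialize results as a JSON array string."""
    return json.dumps([asdict(r) for r in results], ensure_ascii=False, indent=2)


def to_csv(results):
    """Serialize results as CSV text with a header row."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=FIELDS, lineterminator="\n")
    writer.writeheader()
    for r in results:
        writer.writerow(asdict(r))
    return buf.getvalue()


# Hypothetical sample data standing in for scraped results.
results = [
    OrganicResult("serp scraper", 1, "Example A", "https://example.com/a"),
    OrganicResult("serp scraper", 2, "Example B", "https://example.org/b"),
]
```

Calling `to_csv(results)` or `to_json(results)` yields text that can be written to a file or loaded into an analysis tool.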

What truly determines the stability of a tool is not “whether it can scrape,” but “whether it can continuously and reliably retrieve data.” Since Google detects abnormal access behavior, many ordinary scripts are quickly restricted after a short period of use. Professional Google search scraper APIs usually handle automatic IP rotation, simulate users from different regions, process captchas, and control request frequency, which makes the overall data collection process more stable.
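To make "IP rotation" and "request frequency control" more concrete, here is a minimal sketch of both ideas. It performs no network access: `fetch` is a hypothetical callable supplied by the caller, and the proxy values are placeholders. Commercial services are likely to use more elaborate strategies than this round-robin approach.

```python
import itertools
import time


class Throttle:
    """Enforce a minimum interval between consecutive requests."""

    def __init__(self, min_interval, clock=time.monotonic, sleep=time.sleep):
        self.min_interval = min_interval
        self._clock = clock
        self._sleep = sleep
        self._last = None  # time of the previous request, if any

    def wait(self):
        now = self._clock()
        if self._last is not None:
            remaining = self.min_interval - (now - self._last)
            if remaining > 0:
                self._sleep(remaining)
                now = self._clock()
        self._last = now


def fetch_all(keywords, proxies, fetch, throttle):
    """Call fetch(keyword, proxy) per keyword, rotating proxies round-robin."""
    pool = itertools.cycle(proxies)
    results = {}
    for keyword in keywords:
        throttle.wait()  # space requests out before each call
        results[keyword] = fetch(keyword, next(pool))
    return results
```

The clock and sleep functions are injectable so that the pacing logic can be checked without real delays.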

What Can Google SERP Scrapers Be Used For?

Search results are not just rankings; they reflect user intent, the competitive environment, and content quality. More and more businesses now rely on SERP data for decision-making.

SEO Rank Tracking

SEO teams need to continuously monitor keyword ranking changes instead of waiting until traffic drops before discovering problems. By scraping Google search results, businesses can detect ranking fluctuations faster.

● Keyword Rankings: Monitor ranking position changes for core keywords across different countries and devices.

● Featured Snippets: Check whether a page has gained featured snippet visibility.

● Competitor Pages: Analyze which competitors are capturing traffic.

● Search Intent Changes: Observe whether the SERP structure has changed.
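Once ranking snapshots are stored, detecting fluctuations is a simple comparison. The sketch below assumes each snapshot is a plain mapping from keyword to position; that layout is an assumption for illustration, not a format any specific tool exports.

```python
def rank_changes(previous, current):
    """Return {keyword: delta}; a positive delta means the page moved up."""
    changes = {}
    for keyword, new_pos in current.items():
        old_pos = previous.get(keyword)
        if old_pos is None or new_pos is None:
            continue  # newly tracked, or unranked in one of the snapshots
        if new_pos != old_pos:
            changes[keyword] = old_pos - new_pos
    return changes


# Hypothetical snapshots from two consecutive days.
yesterday = {"serp api": 5, "rank tracker": 3, "seo tool": 8}
today = {"serp api": 2, "rank tracker": 3, "seo tool": 11, "new keyword": 4}
```

Here `rank_changes(yesterday, today)` reports only the keywords whose position actually moved, which keeps alerts focused on real fluctuations.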

Competitor Analysis

Many businesses do not lose because of their products, but because of information gaps. Search results are essentially a display window for competitors, and SERP data helps teams quickly identify strategic changes.

● Top 10 Results: Quickly identify major competitors.

● Page Types: Determine whether the content is a blog, product page, or landing page.

● Domain Distribution: Analyze market concentration and competition intensity.

● Brand Visibility: Analyze the search visibility of competing brands.
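Domain distribution, for instance, can be estimated directly from the URLs of the top results. The following is a minimal sketch using the standard library; the sample URLs are placeholders, and stripping a leading "www." is a simplifying assumption rather than a full registrable-domain calculation.

```python
from collections import Counter
from urllib.parse import urlparse


def domain_share(urls):
    """Return {host: share of results}, most frequent host first."""
    hosts = []
    for url in urls:
        host = urlparse(url).hostname or ""
        if host.startswith("www."):
            host = host[4:]  # treat www.example.com and example.com alike
        hosts.append(host)
    total = len(hosts)
    return {host: count / total for host, count in Counter(hosts).most_common()}


# Placeholder URLs standing in for a scraped result list.
top_results = [
    "https://www.example.com/a",
    "https://example.com/b",
    "https://shop.example.org/x",
    "https://example.net/",
]
```

A single host holding a large share of the top results suggests a concentrated market for that keyword.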

Advertising and Campaign Analysis

Ad placement data within SERPs has high commercial value, and tracking it accurately helps teams reduce advertising costs.

● Ad Rankings: Monitor whether ads are consistently displayed.

● Ad Copy: Analyze high-CTR advertising content.

● Bid Changes: Observe changes in competition intensity.

● Regional Differences: View ad visibility across different countries.

eCommerce Product Research

More and more cross-border eCommerce teams are scraping Google search results to observe changes in market demand.

● Product Keywords: Discover growing search demand.

● Google Shopping Data: Analyze trending product visibility.

● User Questions: Analyze real user needs from People Also Ask.

● Search Trends: Identify changes in product popularity.

Best SERP APIs for Google SERP Scraping

There are many tools on the market, but very few are truly stable and scalable. Below are 5 solutions worth paying attention to in 2026.

CoreClaw

CoreClaw is more like a “ready-to-use” data scraping platform. Without complex configurations, users can directly obtain search result data. In addition to supporting basic Google search results scraper functionality, it also provides visual configuration and automated workflows. For teams that do not want to write code, this significantly lowers the barrier to entry. More importantly, it has been optimized for stability and data structure, making it suitable for long-term data collection projects.

For many teams, the most important issue is not technology, but “whether it can be deployed quickly.” CoreClaw provides easier-to-understand API designs and structured output, reducing a large amount of data cleaning time. The platform also supports multiple export formats such as JSON, CSV, and Excel, making it more suitable for direct integration into analytics systems.

Platform Type: No-code web data scraping platform

Pros:

● No development experience or coding required; quick setup in just three steps

● Built-in 100+ scraping tools (Workers), covering common business scenarios

● Automatically handles anti-bot mechanisms, captchas, and IP rotation to significantly improve scraping stability

● Each worker supports batch task settings, enabling multiple data collection tasks to run simultaneously

● The system automatically performs data cleaning, deduplication, and structured organization, allowing direct import into analytics systems

● Pay only for successful results; failed requests do not consume account credits

Pricing:

● 2,000 free credits for new users

● Starting at $29/month

ScraperAPI

ScraperAPI is a general-purpose web scraping platform that also supports Google SERP scraping. Its advantage lies in its large proxy IP pool, making it suitable for development teams that need high request volumes. The platform automatically handles captchas, IP rotation, and browser simulation, reducing much of the maintenance workload. For non-technical users, however, the learning curve is relatively steep.

Platform Type: General web scraping API

Pros:

● Strong automatic proxy rotation capabilities

● Supports JavaScript rendering

● Suitable for large-scale data collection

● Stable concurrent request handling

● Supports multi-language development environments

Pricing:

● 7-day free trial

● Starting at $49/month (US and EU regions only)

Apify

Apify is a flexible data scraping platform that supports both custom crawlers and ready-made templates, which makes it more like an automated scraping ecosystem. In addition to SERP scraping, it offers a large number of ready-made Actor templates. For users who need customized scraping logic, it provides greater flexibility, although the platform is somewhat more complex for business teams.

Platform Type: Cloud-based crawler platform

Pros:

● Provides a large number of ready-made Actor templates

● Supports custom scraping logic

● Runs in the cloud with no local deployment required

● Rich community ecosystem

● Can build complex workflows

Pricing:

● $5 free usage credit upon registration

● Starting at $29/month

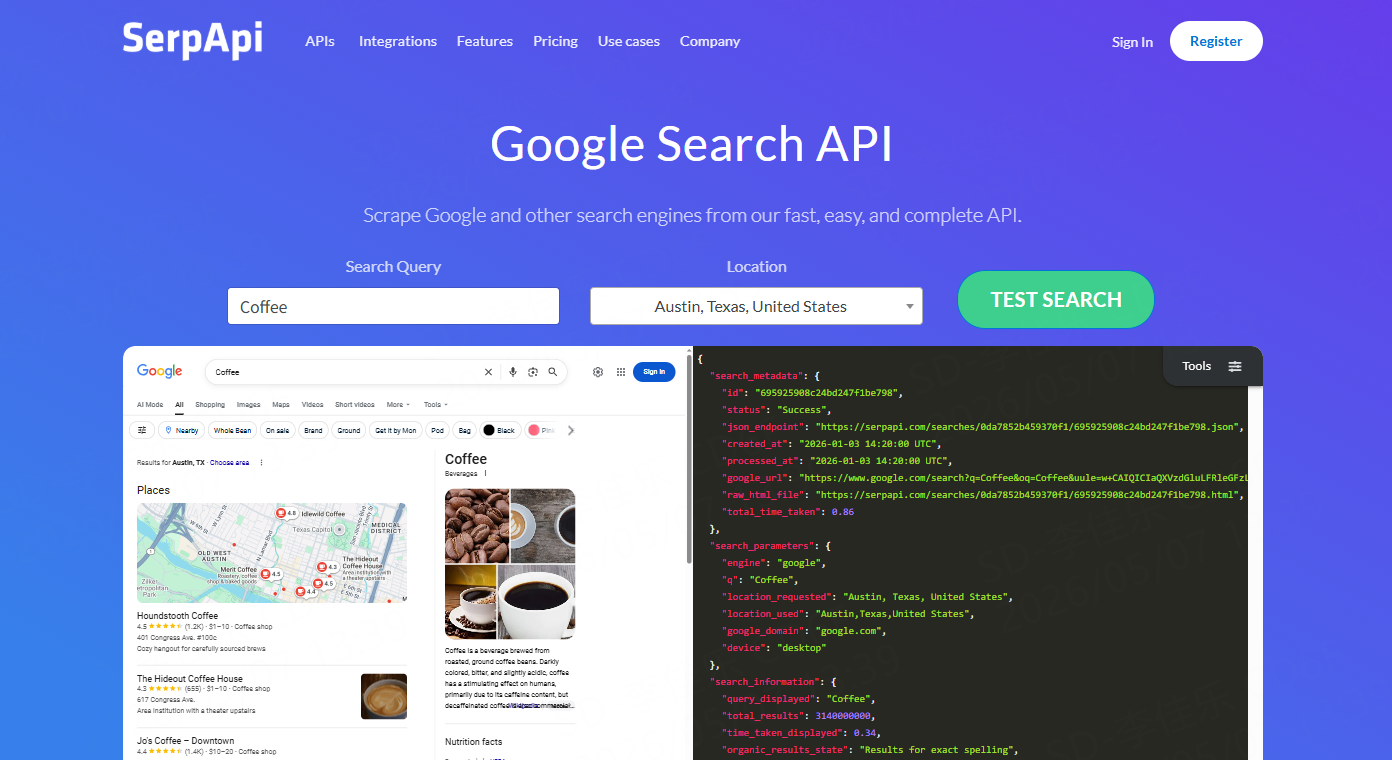

SerpAPI

SerpAPI focuses on search engine data APIs and supports Google, Bing, Yahoo, and multiple other search engines. It is one of the more mature solutions on the market. Its strengths lie in interface stability and standardized data structures, although overall pricing is relatively high, making it more suitable for teams with sufficient budgets and high requirements for data quality.

Platform Type: Professional search engine data API

Pros:

● Broad search engine support

● Standardized and consistent data structure

● Supports multi-region search results

● Fast response speed

● Well-documented and easy to integrate

Pricing:

● 250 free searches per month

● Starting at $25/month

ScrapingBee

ScrapingBee provides a web scraping API with stronger emphasis on browser rendering capabilities, making it more suitable for teams that need to handle dynamic pages. Although it is not specifically a SERP platform, it is a flexible solution for users who need to process both search results and webpage content scraping.

Platform Type: Web scraping API

Pros:

● Supports JavaScript-rendered pages

● Automatically handles proxies and browser environments

● Flexible API suitable for multiple scenarios

● Simple integration

Pricing:

● 1,000 free API credits

● Starting at $49/month

Google SERP Scraper Comparison Table

| Tool | No-Code Support | Stability | Ease of Use | Output Formats | Custom Services | Pricing Model |
| --- | --- | --- | --- | --- | --- | --- |
| CoreClaw | ✅ | High | High | JSON, CSV, Excel, etc. | ✅ Enterprise customization | Charged per successful request |
| ScraperAPI | ❌ | High | Medium | JSON | ✅ | Charged by request volume |
| Apify | Partial | Medium | Medium | JSON, CSV, XML | ✅ | Subscription + resource-based billing |
| SerpAPI | ✅ | High | High | JSON | ✅ | Charged by search volume |
| ScrapingBee | ❌ | Medium | High | JSON | ✅ | Charged by API credits |

Benefits of Using a Google SERP Scraper

Once search result data is systematized, the way decisions are made changes significantly. Decisions are no longer based on intuition, but on data.

1. Enables continuous keyword performance monitoring and rapid strategy adjustments, making SEO optimization more directional and measurable

2. Allows fast analysis of competitor strategies, avoiding repeated investment in ineffective content

3. Automated data collection reduces manual operation costs, enabling teams to focus more on strategy rather than execution

4. Data can be accumulated over the long term to build a proprietary search trend database

5. Supports cross-region and multi-keyword analysis, improving the comprehensiveness of market insights

6. Large-scale SERP data accumulation also helps businesses build long-term keyword databases for trend forecasting and content planning

Challenges of Scraping Google SERPs

Although Google SERP scraping has become increasingly mature, the following issues still exist in real-world scraping processes.

1. Anti-Bot Mechanism Restrictions

Overview: Search engines limit frequent requests, resulting in failed data retrieval.

Solution: CoreClaw improves scraping stability through automatic IP rotation, request frequency control, and real browser environment simulation.

2. Unstable Data Structures

Overview: Search result page structures constantly change, which can easily cause field parsing failures.

Solution: Adopting modular parsing and automatic update mechanisms can reduce the impact of page structure changes.
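One way to read "modular parsing" is to give every output field its own ordered list of extractors, so that a layout change breaks at most one field and can be patched by adding a new extractor. The sketch below works on an already-parsed dictionary; the key names (`heading`, `link`, and so on) are hypothetical and do not describe Google's actual markup.

```python
def first_match(extractors, raw):
    """Try extractors in order; return the first non-empty value, else None."""
    for extract in extractors:
        try:
            value = extract(raw)
        except (KeyError, IndexError, TypeError):
            continue  # this layout variant does not apply; try the next one
        if value:
            return value
    return None


# Each field lists extractors for a newer layout first, then older fallbacks.
# All key names here are hypothetical.
FIELD_EXTRACTORS = {
    "title": [lambda r: r["heading"], lambda r: r["title"]],
    "url": [lambda r: r["link"]["href"], lambda r: r["url"]],
}


def parse_result(raw):
    """Parse one result block field by field; missing fields become None."""
    return {
        field: first_match(extractors, raw)
        for field, extractors in FIELD_EXTRACTORS.items()
    }
```

Because each field fails independently, a record with a missing URL still keeps its title instead of being discarded outright.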

3. Cost Control Issues

Overview: Large-scale keyword scraping continuously increases costs for proxies, servers, and maintenance.

Solution: CoreClaw reduces invalid requests and resource waste through batch scheduling and automatic retry mechanisms.
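As a generic illustration of batch processing with automatic retries (not a description of CoreClaw's internals), the sketch below retries a failing keyword with exponential backoff and collects the keywords that still fail. `fetch` is a hypothetical callable, and using `RuntimeError` as the failure signal is an assumption made for the example.

```python
import time


def fetch_with_retry(fetch, keyword, max_attempts=3, base_delay=1.0, sleep=time.sleep):
    """Call fetch(keyword), retrying with exponential backoff on RuntimeError."""
    for attempt in range(max_attempts):
        try:
            return fetch(keyword)
        except RuntimeError:
            if attempt == max_attempts - 1:
                raise  # out of attempts; let the caller record the failure
            sleep(base_delay * 2 ** attempt)  # waits 1x, 2x, 4x ... the base delay


def run_batch(fetch, keywords, **retry_options):
    """Process keywords in order; return (results, keywords that failed)."""
    done, failed = {}, []
    for keyword in keywords:
        try:
            done[keyword] = fetch_with_retry(fetch, keyword, **retry_options)
        except RuntimeError:
            failed.append(keyword)
    return done, failed
```

Capping the number of attempts keeps a permanently failing keyword from consuming requests indefinitely, which is where much of the wasted cost tends to come from.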

4. Legal and Compliance Risks

Overview: Improper data collection methods may violate platform policies.

Solution: CoreClaw automatically controls scraping frequency and only retrieves publicly available data, making it more suitable for long-term and stable use.

Google SERP Scraper: Decision Tree

When choosing a Google SERP scraper, the key is not “which has the most features,” but “which best fits your current business stage.” The following simple decision path can help make the selection process easier.

● Do you need a no-code solution?

✅ Yes → Recommend CoreClaw

❌ No → Continue to the next step

● Do you need developer-level flexibility and control?

✅ Yes → Recommend Apify or ScraperAPI

❌ No → Continue to the next step

● Do you need long-term, large-scale, stable scraping?

✅ Yes → Recommend CoreClaw or SerpAPI

❌ No → Continue to the next step

● Do you need multi-region and multi-device data?

✅ Yes → Recommend SerpAPI

❌ No → Continue to the next step

● Do you care more about simple integration and cost control?

✅ Yes → Recommend ScrapingBee

❌ No → Prioritize automated web scraping platforms such as CoreClaw
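The decision path above can also be written as a small function, which may help if you want to embed the same logic in an internal checklist or tool. This is only a direct transcription of the questions in this article; the parameter names are made up for the example.

```python
def recommend(no_code=False, dev_flexibility=False, large_scale=False,
              multi_region=False, simple_and_low_cost=False):
    """Walk the article's decision path and return the suggested tools."""
    if no_code:
        return ["CoreClaw"]
    if dev_flexibility:
        return ["Apify", "ScraperAPI"]
    if large_scale:
        return ["CoreClaw", "SerpAPI"]
    if multi_region:
        return ["SerpAPI"]
    if simple_and_low_cost:
        return ["ScrapingBee"]
    # Fallback from the last branch: an automated scraping platform.
    return ["CoreClaw"]
```

The questions are checked in order, so an earlier "yes" takes priority over later ones, exactly as in the list.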

Conclusion

Search competition in 2026 is no longer just about content; it is also about data acquisition capability. Teams that obtain SERP data faster are more likely to identify traffic opportunities, advertising changes, and shifts in user demand.

If your team is looking for a solution that is easier to implement and suited to long-term use, tools like CoreClaw that emphasize stability and ease of use are a better fit for business growth than platforms that simply pursue technical complexity.


Disclaimer: Views expressed are solely the author's and do not constitute business commitments.
